Studies on television sound often point out that the meaning of television is largely determined by the soundtrack rather than the images. For example, in his 1982 book Visible Fictions John Ellis argues that television employs sound “to ensure a certain level of attention, to drag viewers back to looking at the set” (Ellis 1982: 128). Sound is more important for television, in other words, because it anchors the meaning of televisual texts. This argument has often been echoed in subsequent studies on television sound. For example, Rick Altman’s 1986 essay “Television/Sound” similarly claims that television employs sound to draw attention away from “surrounding objects of attention” (Altman 1986: 50), and the techniques used to structure this appeal were inherited from earlier sound practices developed for radio—another medium that was received in the home and that was forced to compete with household distractions for the listener’s attention. Subsequent critics have continued to endorse this notion of television as radio with pictures. For example, Michel Chion describes a similar distinction between film and television in his 1990 book Audio-Vision, in which he argues that “in the cinema everything passes through an image,” while “sound, mainly the sound of speech, is always foremost in television” (Chion 1994: 157-158). Chion also argues that television sound “does not need the image to be identified” (Chion 1994: 157), which explains why “silent television is inconceivable, unlike cinema” (Chion 1994: 165). He thus concludes that “television is fundamentally a kind of radio, ‘illustrated’ by images” (Chion 1994: 165). Herbert Zettl’s 2005 textbook on media aesthetics, Sight Sound Motion, similarly argues that “television is definitely not a predominantly visual medium [...] All television events happen within a specific sound environment, and it is often the sound track that lends authenticity to the pictures and not the other way around” (Zettl 2005: 328-329). 
Ellis, Altman, Chion, and Zettl thus identify the fundamental differences between film and television as a direct result of the fact that television privileges the ear over the eye, which aligns television more closely with radio than film.

The dramatic changes that television has undergone in recent years have encouraged critics to re-examine the function of television sound. High definition television, home theaters and 3D technology have brought the cinematic experience into the home, thereby challenging the persistent belief that television is primarily acoustic rather than visual. As Michele Hilmes points out, these changes have made it difficult to generalize about the form of televisual texts, yet Hilmes adds that television’s “streaming transmission” still requires the use of “supratextual sound,” which refers to sounds that relate “less to the specific programme text being viewed than to the supertext understood as the television viewing experience as a whole—the voice of the network announcer touting upcoming shows, the ebullient narrator of commercials, news bulletin interruptions, etc.” (Hilmes 2008: 158-159). Like Ellis, Altman, Chion, and Zettl, therefore, Hilmes argues that television sound practices are essentially derived from radio:

These are all a part of television’s live/streaming aesthetic inherited from radio. They make unusually explicit and frequent use of direct address narration – speaking to ‘you’ the viewer – compared to cinema where such direct address is rare […] [T]he title song and/or sequence, along with the ‘intros’ and ‘bumpers,’ are also derived from radio practice […] [S]uch uses of music and sound are clearly nondiegetic […] yet in television they frequently provide key information about the programme’s basic narrative situation, characters, and plot elements that are necessary for an intelligible reading of the episodic text. This kind of rapid alternation between levels of textuality…traces its roots to techniques developed in radio in the 1930s and 40s. (Hilmes 2008: 159-160)

By stressing the close affinity between television and radio, Hilmes’ argument effectively mirrors the claims made by these earlier critics: “Once we understand that television owes its most basic narrative structures, programme formats, genres, modes of address, and aesthetic practices not to cinema but to radio […] we can begin to appreciate the unique and complex narratives that television’s sonically-oriented streamed seriality has made possible” (Hilmes 2008: 160). Despite the radical technological changes that television has undergone in recent years, therefore, Hilmes ultimately reinforces the notion of television as “illustrated radio.”

While the use of voice-overs, theme songs, intros, and laugh tracks is clearly borrowed from radio practices, the relationship between televisual sounds and images is becoming increasingly complex as new layers of information content are being superimposed over television images. Consider, for example, the use of news tickers, weather tracking information, and Twitter feeds, which introduce additional layers of information that are entirely distinct from the primary sounds and images associated with television programs. Pop-up windows and advertisements also provide additional layers of information, and rather than supplementing the primary text they more often serve as distractions by multiplying the number of available information streams presented on the television screen. The density of information provided by these textual features is further enhanced by the development of new media interfaces whose interactive menus provide metadata on the programs as well as schedules and search functions. With the rise of the so-called “post-television era,” which is being fueled in part by the widespread acceptance and popularity of DVRs, tablets, and smart phones, the boundaries between television and the internet are now blurring even more (see Bennett and Strange 2011). As a result, television is no longer characterized by what Hilmes calls “streamed seriality”; rather, the contemporary television viewer now experiences multiple streams of seriality at the same time. Moreover, the textual features proliferating on contemporary television screens are rarely accompanied by any sonic elements or sound effects, which fundamentally challenges the notion that television still requires the use of “supratextual sound” or that television’s primary mode of address is through speech. Television is thus becoming an increasingly multi-sensory and participatory experience that has less in common with radio than it does with computer or web-based platforms.

These recent innovations clearly demand a re-examination of television sound and the relationship between televisual sounds and images, and this paper will attempt such a re-examination by returning to Canadian media theorist Marshall McLuhan’s concept of “acoustic space.” McLuhan’s concept of “acoustic space” provides an ideal conceptual tool for understanding these new televisual environments, as it explains not only how television challenges the visual regime of other optical media, like film, but also how the multi-sensory complexity of television differs from purely acoustic media, like radio. Television is increasingly becoming a complex, immersive information space, which forces the viewer to actively participate in its construction by navigating the breaks and discontinuities between competing information streams.

McLuhan’s concept of “auditory space” was originally inspired by the Hungarian biophysicist Georg von Bekesy, who borrowed the term from the German physiologist and physicist Hermann von Helmholtz. In his 1960 book Experiments in Hearing, von Bekesy discusses the spatialization of the aural using a visual analogy:

The less the loudness, the farther away from the head the sound image seemed to be, and when the loudness remained constant this distance was always the same. However, the conditions were indeterminate as to front-back location. It was possible for a subject voluntarily to shift the image from front to back or vice-versa. These conditions have an analogy in vision in the perception of reversible perspective figures...where the shaded surface can be seen as in front of, or behind, or on the same plane with the other surfaces. (Von Bekesy 1960: 280-281)

In order to explain the difference between visual and acoustic space, von Bekesy also compared Renaissance woodcuts to Persian mosaics: while the woodcuts reflect the principle of Renaissance perspective, the mosaics illustrate the notion of “reversible perspective,” which reflects the same confusion of figure and ground associated with acoustic space (see Figure 1).

McLuhan was greatly inspired by von Bekesy’s distinction between visual and acoustic space, but he developed these terms into epistemological categories. For McLuhan, visual space not only reinforces Renaissance perspective, but it also represents a form of linear and logical thinking, which is indelibly linked to the rise of literacy and print technology:

[V]isual space structure is an artifact of Western civilization created by Greek phonetic literacy. It is a space perceived by the eyes when separated or abstracted from all other senses. As a construct of the mind, it is continuous, which is to say that it is infinite, divisible, extensible, and featureless [...] It is also connected (abstract figures with fixed boundaries, linked logically and sequentially but having no visible grounds), homogeneous (uniform everywhere), and static (qualitatively unchangeable). It is like the “mind’s eye” or visual imagination which dominates the thinking of literate Western people. (McLuhan 2004: 71)

Visual space reflects the principles of logic, in other words, because it is sequential, homogeneous, and static. McLuhan describes acoustic space, on the other hand, as “the natural space of nature-in-the-raw inhabited by non-literate people. It is like the ‘mind’s ear’ or acoustic imagination that dominates the thinking of pre-literate and post-literate humans alike […] It is both discontinuous and non-homogeneous. Its resonant and interpenetrating processes are simultaneously related with centers everywhere and boundaries nowhere” (McLuhan 2004: 71). Acoustic space thus reflects a different epistemological orientation, which is fundamentally discontinuous, non-homogeneous, fluid, and decentered.

McLuhan also illustrated the differences between visual and acoustic space using a visual analogy borrowed from von Bekesy:

The world of the flat iconic image, [von Bekesy] points out, is a much better guide to the world of sound than three-dimensional and pictorial art. The flat iconic forms of art have much in common with acoustic or resonating space. Pictorial three-dimensional art has little in common with acoustic space because it selects a single moment in the life of a form, whereas the flat iconic image gives an integral bounding line or contour that represents not one moment or one aspect of a form, but offers instead an inclusive integral pattern. (McLuhan 1966: 97)

In other words, unlike “pictorial” or “three-dimensional” art, which represents visual space, the flat or “two-dimensional” iconic image represents acoustic space because it has no fixed boundaries and no center, it defies the laws of perspective, and it is constantly changing:

[Acoustic space is] a sphere without fixed boundaries, space made by the thing itself, not space containing the thing […] [I]t is indifferent to background. The eye focuses, pinpoints, abstracts, locating each object in physical space, against a background; the ear, however, favors sound from any direction. We hear equally well from right or left, front or back, above or below. If we lie down, it makes no difference, whereas in visual space the entire spectacle is altered. We can shut out the visual field by simply closing our eyes, but we are always triggered to respond to sound […] There is nothing in auditory space corresponding to the vanishing point in visual perspective. (Carpenter and McLuhan 1960: 67-68)

While “‘rational’ or pictorial space is uniform, sequential and continuous and creates a closed world,” acoustic space has “no center and no margin” (McLuhan 1969: 59). Rather than conveying meaning through linearity and coherence, therefore, acoustic space conveys meaning through discontinuity and resonance: “[T]he two-dimensional mosaic is, in fact, a multi-dimensional world of interstructural resonance. It is the three-dimensional world of pictorial space that is, indeed, an abstract illusion built on the intense separation of the visual from the other senses” (McLuhan 1962: 43). The term “resonance” is particularly crucial to McLuhan’s understanding of acoustic space, as Richard Cavell emphasizes: “‘Resonance’ conceptualizes the break in the uniformity and continuity of space as visualized; it is a sign, in other words, of the discontinuity of acoustic space, of the fact that it produces meaning through gaps” (Cavell 2002: 23). Unlike pictorial space, which conveys a coherent meaning by privileging vision over the other senses, acoustic space thus represents the confluence of multiple streams of sensory information whose meaning is derived precisely from its discontinuities and ruptures.

According to McLuhan, the history of optical media represents a direct continuation of three-dimensional pictorial art: “Photography gave separate and, as it were, abstract intensity to the visual [...] Movies and photo-engraving created a further revolution in Western sensibilities, tending to high stress on pictorial quality in all aspects of human association” (McLuhan 2005: 44). Photography and film thus represent a continuation and reinforcement of Renaissance perspective. This history was interrupted, however, with the invention of television: “[T]here has been no respite from this growing pictorial stress till the advent of television” (McLuhan 2005: 44). Unlike film, which represents a visual space that resembles three-dimensional pictorial art, television thus represents an acoustic space that more closely resembles flat, two-dimensional iconic images. Drawing on von Bekesy’s visual analogy, McLuhan even refers to television as a “mosaic mesh”: “It is a two-dimensional mosaic mesh, a simultaneous field of luminous vibration that ends the older dichotomy of sight and sound” (McLuhan 2005: 45).

McLuhan describes television as an acoustic space because it does not provide any sense of direction or perspective, and therefore it does not follow the principle of sequence or seriality. Instead, it is based on the principle of simultaneity: “Television...deal[s] with auditory space, by which I mean that sphere of simultaneous relations created by the act of hearing. We hear from all directions at once; this creates a unique unvisualizable space. The all-at-onceness of auditory space is the exact opposite of lineality, of taking one thing at a time” (McLuhan 1963: 43). McLuhan also describes auditory space as the juxtaposition – not the integration or synthesis – of disparate elements:

[A]ny pattern in which the components co-exist without direct lineal hook-up or connection, creating a field of simultaneous relations, is auditory, even though some of its aspects can be seen [...] The items of news and advertising that exist under a newspaper dateline are interrelated only by that dateline. They have no interconnection of logic or statement. Yet they form a mosaic […] whose parts are interpenetrating … It is a kind of orchestral, resonating unity, not the unity of logical discourse. (McLuhan 1963: 43)

McLuhan’s description of the “orchestral, resonating unity” of the newspaper clearly echoes his earlier description of the “jazzy, ragtime discontinuity” of the front page of The New York Times, which he compares to the “visual technique of a Picasso” and the “literary technique of James Joyce” (McLuhan 1951: 3).

In a letter to fellow media theorist Harold Innis, McLuhan similarly describes acoustic space as “the discontinuous juxtaposition of unrelated items.” Because it represents the juxtaposition of multiple sensory channels, acoustic space does not privilege either the ear or the eye; rather, it represents the interplay of all the senses. This is precisely what McLuhan means when he refers to the “tactility” of television: “Its two-dimensional, contoured character fosters the tactile interplay of the senses […] Television is not just sight and sound, but tangibility in its visual, contoured, sculptural mode” (McLuhan 2005: 46-47). Because the acoustic space of television engages multiple senses, the act of viewing television also requires active participation. Television promotes “in-depth involvement” because the viewer is engaged in a “constant creative dialog with the iconoscope” (McLuhan 1969: 61). In other words, television immerses the viewer in a confusing, disorienting, and information-rich space, and the viewer’s active involvement and engagement are required in order to navigate this space: “By requiring us to constantly fill in the spaces of the mosaic mesh […] the viewer, in fact, becomes the screen, whereas in film he becomes the camera” (McLuhan 1969: 61). In order to emphasize this notion of television as an immersive environment, McLuhan even describes the television viewer as a “skin diver” (McLuhan 2005: 44).

McLuhan’s notion of television as acoustic space thus offers an alternative to Raymond Williams’ famous description of “programming flow,” which was based on the premise that television represents a linear sequence of isolated and incoherent fragments (Williams 1975: 86). While McLuhan agrees that television programming is fundamentally incoherent, he attributes this incoherence to the presence of parallel information streams rather than the linear arrangement of discrete fragments. In contrast to Williams, McLuhan argues that it is not the “liveness” of television that distinguishes it from other media, but rather its “all-at-onceness.” Instead of arguing that the television soundtrack helps the viewer to navigate and make sense of “programming flow,” McLuhan proposes that the television environment is fundamentally non-linear and multisensory, and that it therefore reflects the immersive qualities of sound itself.

One of the earliest attempts to present textual information on the television screen was the development of teletext, an information retrieval service that offered a wide range of text-based information, including news, sports, weather reports and programming schedules. The BBC introduced the first teletext system, now known as “Ceefax” (short for “see facts”), in 1972. Ceefax was initially limited to 30 pages of text, but when it was formally launched in 1976 it had already been expanded to 100 pages. By the mid-1980s Ceefax was broadcasting several hundred pages, which were constantly updated throughout the day, and built-in decoders were available as an option on nearly all European television sets (for more on Ceefax, see Thomas 2011: 61-62). The first teletext service in North America was launched by station KSL in Salt Lake City in 1978. CBS subsequently performed its own preliminary tests using both the British teletext system and the French Antiope system. The most prominent American teletext service was Electra, which was broadcast on cable channel WTBS in the early 1980s. However, teletext failed to attract a sufficient number of users in the US, and the Electra teletext service was eventually discontinued in 1993.

Although teletext is rarely discussed in most histories of television (Jensen 2008 is a rare exception), it represents perhaps the earliest example of a new conception of television as an electronic newspaper, which predated the internet by several decades. Each page of teletext data includes fragments of information, such as news headlines, stock prices, weather forecasts, and advertisements, which are not connected by any logic except the time and date of the transmission. Like the newspaper, therefore, teletext represents a non-linear mosaic of discontinuous elements, and it clearly refutes Chion’s claim that “silent television is inconceivable” (Chion 1994: 165).

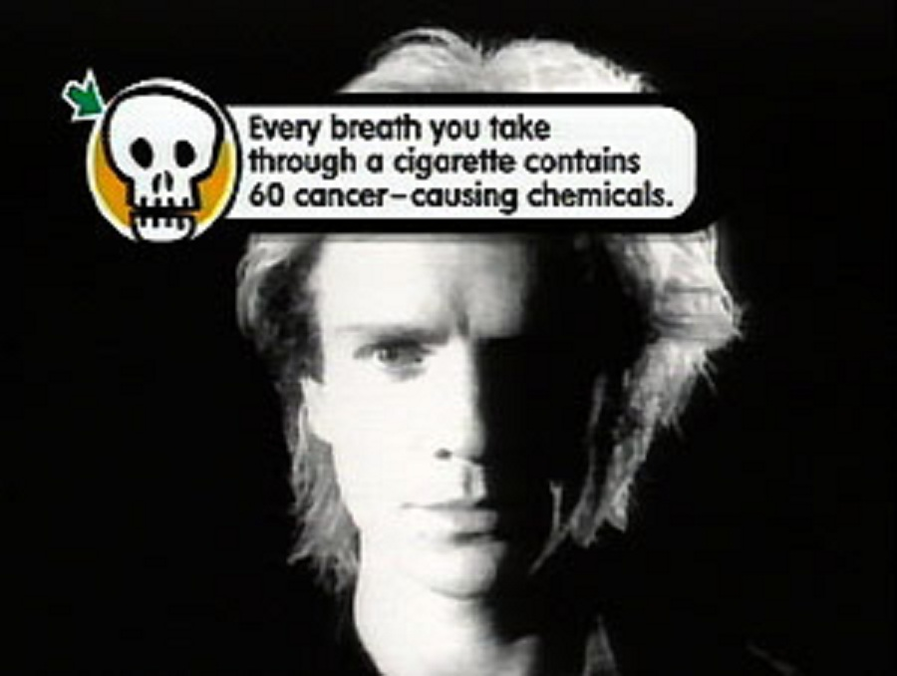

Another development that brought more textual information to the television screen was the use of news tickers, which are also known as “scrolls,” “crawlers,” or “slides.” The first tickers appeared in the northern parts of the US in the early 1980s, when local and network newscasts began featuring information on school schedule changes and the cancellation of school bus services due to inclement weather. Severe weather warnings were also featured on local station tickers, and in the beginning these tickers were often accompanied by a warning tone or the use of theme music. On NBC, for example, weather tickers were initially accompanied by the same chimes that the network used as its official audio logo or sonic signature. In the mid-1980s ESPN also introduced a sports ticker that would feature up-to-the-minute sports scores at the top or bottom of the screen every half hour. In the late 1980s CNN Headline News became the first television network to feature a continuously running ticker on the bottom of the screen. The CNN ticker initially featured stock prices during business hours, but in 1992 it was combined with the “HLN Sports Ticker,” which became the first continuous 24-hour ticker on television. The attacks on September 11, 2001 played a particularly significant role in encouraging the widespread use of news tickers. On the morning of September 11, Fox News introduced a continuous ticker at the bottom of the television screen in order to provide emergency information to viewers. CNN and MSNBC introduced similar tickers soon after. Although the need to report emergency information lasted only a few weeks, all three networks eventually decided that these tickers would help increase viewership, particularly among viewers with the ability to process multiple simultaneous streams of information. As a result, tickers soon became a standard feature of television news.

Of course none of these textual features were entirely new, as sports scores, election results, and closed captioning were all long-standing practices that involved the presentation of textual information on television screens. In recent years, however, the number of such features has multiplied dramatically, fueled in part by the 24-hour news cycle, the rise of electronic trading, and the increased size of television sets (see Cushion and Lewis 2010).

Another feature that brought more textual information to the television screen was the use of pop-up windows and advertisements. One of the earliest examples of the inclusion of pop-up windows was featured on Pop Up Video (1996-), a television program broadcast on VH1 that showed music videos along with word balloons – officially known as “info nuggets” – containing background information, entertainment news, pop culture trivia, jokes, and other forms of commentary that were often unrelated to the videos themselves (see Burns 2004).

Pop-up advertisements are overlays that usually appear at the bottom of the television screen. These “banners” or “logo bugs,” as they are also known, are similar to news tickers, although they are not continuous and they often block out part of the image (in some cases, they take up as much as 25 percent of the available screen space).

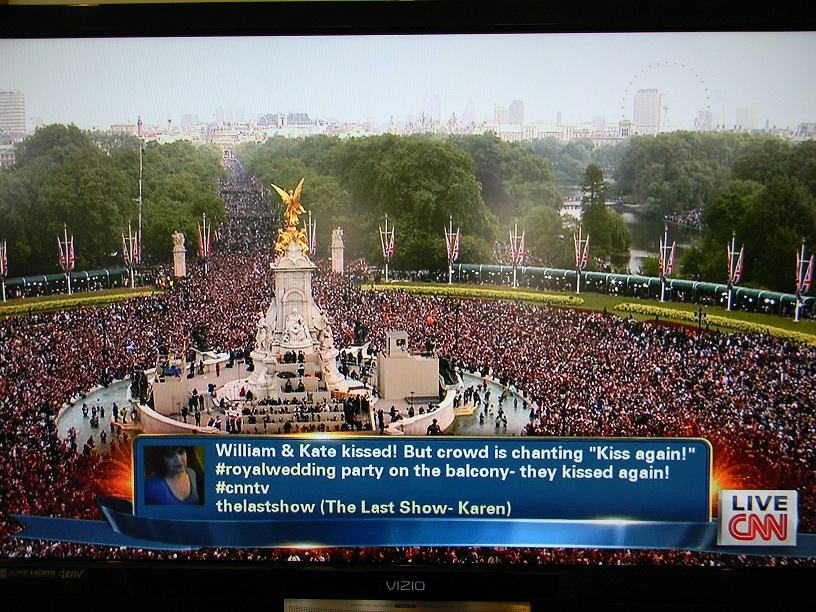

Another example of how television increasingly features textual information is the incorporation of Twitter feeds. While Twitter feeds are often incorporated into television news coverage of various events, some networks have begun to introduce continuously streaming Twitter feeds during television programs.

One event that especially helped to promote the integration of television and Twitter was the royal wedding of Prince William and Kate Middleton on April 29, 2011. Viewers were invited to interact with ABC News’ coverage of the event by using the hashtags #RoyalSuccess and #RoyalMess. Viewers could also share their thoughts and opinions with CNN by including the hashtag #CNNTV in their tweets. As a result, viewers around the world posted millions of tweets during the broadcast, and Twitter traffic peaked at roughly 16,000 tweets per minute.

Samsung has also introduced a new application called “TV Twicker,” which is designed to enable the complete integration of television and Twitter:

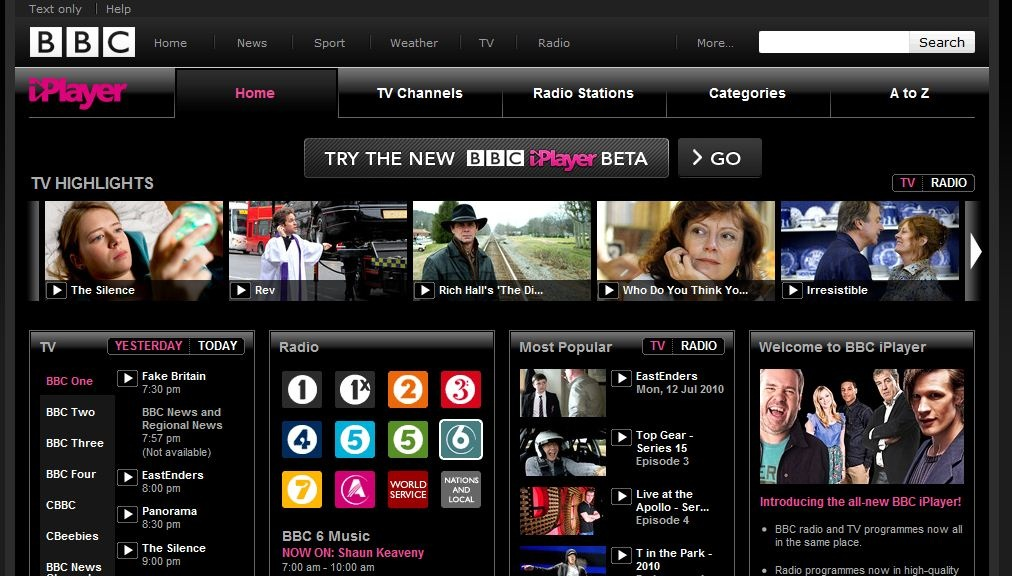

Perhaps the most significant recent development in the distribution and reception of television programming is the rise of internet television and video on demand services, which allow viewers to interact with the internet through the television screen. The earliest version of internet television was Web TV, which was launched in 1996. Web TV allowed viewers to check e-mail, surf the internet, and watch streaming video through the television set, although it required dial-up modem access. Microsoft purchased Web TV in 1999, and in 2001 it was relaunched as MSN TV. Microsoft also partnered with Rogers Cable to introduce “Rogers Interactive TV,” which was the first broadband implementation of MSN TV. The Homechoice service also began providing a 60-channel streaming television service using broadband in 2003, but download speeds were still quite slow. As broadband speed increased, the BBC began developing their “interactive media player” or iPlayer, which was released in 2007. This service was tremendously successful due to its ease of use, high-quality content, and constant updates (see Bennett and Strange 2011: 1-2; Evans 2011: 45-47).

As a result of the success of iPlayer, ITV, Channel 4, and Channel 5 soon introduced their own video on demand services. Other internet television providers include Hulu in the US, Nederland 24 in the Netherlands, ABC iview and Australia Live TV in Australia, and Tivibu in Turkey.

As a result of these innovations, the television screen has increasingly become a vast field of multisensory data, including images, sounds, and texts, and viewers are now expected to learn how to process multiple simultaneous streams of information and navigate the often complex media interfaces that provide metadata on programming content. This gradual integration of television and the internet clearly supports McLuhan’s notion of television as an acoustic space. First, the increasing use of textual features reinforces the notion of television as a flat, two-dimensional surface that defies Renaissance perspective and reflects the spatial qualities associated with hearing. Second, the juxtaposition of optical, acoustic, and written information provides an ideal example of what McLuhan describes as the “field of simultaneous relations,” as television is increasingly becoming an assemblage of parallel but not converging information streams. Third, the discontinuous juxtaposition of simultaneous yet unrelated information streams effectively forms a mosaic mesh, where the relationship between figure and ground is constantly shifting and changing. Fourth, meaning is generated not through the isolation of a single continuous information stream or sensory channel, but rather through the resonances created through the gaps and discontinuities between information streams. Finally, because the relationship between figure and ground is dependent on the choices made by the viewer, television audiences now play an increasingly active role in constructing the meaning of televisual texts. In some cases, such as the royal wedding in 2011, the activities performed by viewers even become part of the televisual text itself.

McLuhan’s notion of television as an acoustic space is particularly relevant today because it provides an ideal description of the inherent connections between television and networked environments. There is a direct correspondence, for example, between the “all-at-onceness” of television and the kinds of media interfaces developed for computer and web-based platforms. As Tara McPherson argues, web browser navigation provides the user with a sense of choice and feedback: “Choice, personalization, and transformation are heightened as experiential lures, accelerated by feelings of mobility and searching, engaging the user’s desire along different registers” (McPherson 2006: 206). Daniel Chamberlain points out that graphic user interfaces similarly offer the promise of “personalization and control” (Chamberlain 2011: 236), and these “media interfaces can be considered the equivalent of the spatial environment in that they constitute the ontological realm of reception” (Chamberlain 2011: 242). Chamberlain thus argues that new media interfaces have gradually become an inherent part of the television experience, and that the rise of networked environments requires scholars to reexamine the aesthetics of televisual texts.

The rise of new media interfaces particularly requires a reexamination of the relationship between televisual sounds and images, as these interfaces directly challenge many of the assumptions underlying previous studies of television sound. This paper provides one example of how sound-image relations are changing by examining the recent explosion of textual features on television screens. As a result of this dramatic increase in the number of information streams available on television and the different kinds of information they provide, discontinuity has gradually become more prevalent than continuity, simultaneity is gradually displacing seriality, and the meaning of televisual texts is increasingly based on the principle of resonance rather than convergence. Concepts like “diegetic” and “non-diegetic” are largely irrelevant in the dense, information-rich environment of television, and audience participation is becoming more important as users are asked to navigate television in the same way they browse the internet.

Given this situation, it is impossible to conclude that television sound anchors the meaning of the images or that television represents a form of “illustrated radio.” It is also impossible to conclude that the rise of home entertainment systems has transformed television sound into a supplement of the image, like film. Rather, McLuhan’s notion of acoustic space provides a conceptual tool for understanding the media-specific properties of television without relying on comparisons to either radio or film. The spatial qualities of television clearly reflect the non-directionality, unboundedness, and simultaneity of hearing, yet these qualities are a product of the juxtaposition of simultaneous multisensory inputs that do not privilege the ear over the eye or the eye over the ear. In other words, television represents a uniquely hybrid medium whose meaning is to be found not in the convergence of information streams, but rather in the resonating unity generated by the gaps and ruptures between them. Even when television makes no sound at all, its underlying structure remains fundamentally acoustic rather than visual.

Altman, Rick (1986). “Television/Sound.” In Tania Modleski (ed.), Studies in Entertainment: Critical Approaches to Mass Culture (pp. 39-54). Bloomington, IN: Indiana University Press.

Bennett, James, and Niki Strange, eds. (2011). Television as Digital Media. Durham, NC: Duke University Press.

Burns, Gary (2004). “Pop Up Video: The New Historicism.” Journal of Popular Film and Television 32/2: 74-83.

Carpenter, Edmund, and Marshall McLuhan (1960). “Acoustic Space.” In Edmund Carpenter and Marshall McLuhan (eds.), Explorations in Communication: An Anthology (pp. 65-70). Boston, MA: Beacon Press.

Cavell, Richard (2002). McLuhan in Space: A Cultural Geography. Toronto: University of Toronto Press.

Chamberlain, Daniel (2011). “Scripted Spaces: Television Interfaces and the Non-Places of Asynchronous Entertainment.” In James Bennett and Niki Strange (eds.), Television as Digital Media (pp. 230-254). Durham, NC: Duke University Press.

Chion, Michel (1994). Audio-Vision: Sound On Screen (ed. and trans. Claudia Gorbman). New York: Columbia University Press.

Cushion, Stephen, and Justin Lewis (2010). The Rise of 24-Hour News Television. New York: Peter Lang.

Ellis, John (1982). Visible Fictions: Cinema, Television, Video. London: Routledge.

Evans, Elizabeth (2011). Transmedia Television: Audiences, New Media, and Daily Life. New York: Routledge.

Hilmes, Michele (2008). “Television Sound: Why the Silence?” Music, Sound, and the Moving Image 2/2: 153-161.

Jensen, Jens F. (2008). “Interactive Television: A Brief Media History.” Lecture Notes in Computer Science 5066: 1-10.

McLuhan, Marshall (1951). The Mechanical Bride: Folklore of Industrial Man. New York: Vanguard Press.

McLuhan, Marshall (1962). The Gutenberg Galaxy: The Making of Typographic Man. Toronto: University of Toronto Press.

McLuhan, Marshall (1963). “The Agenbite of Outwit.” Location 1/1: 41-44.

McLuhan, Marshall (1966). “Cybernation and Culture.” In Charles R. Dechert (ed.), The Social Impact of Cybernetics (pp. 95-108). Notre Dame, IN: University of Notre Dame Press.

McLuhan, Marshall (1969). “Playboy Interview: Marshall McLuhan.” Playboy: 53-74, 158.

McLuhan, Marshall (2004). “Visual and Acoustic Space.” In Christoph Cox and Daniel Warner (eds.), Audio Culture: Readings in Modern Music (pp. 67-73). New York: Continuum.

McLuhan, Marshall (2005). “Inside the Five Sense Sensorium.” In David Howes (ed.), Empire of the Senses: The Sensual Culture Reader (pp. 43-52). Oxford: Berg.

McPherson, Tara (2006). “Reload: Liveness, Mobility, and the Web.” In Wendy Hui Kyong Chun and Thomas W. Keenan (eds.), New Media, Old Media: A History and Theory Reader (pp. 199-208). London: Routledge.

Thomas, Julian (2011). “When Digital Was New: The Advanced Television Technologies of the 1970s and the Control of Content.” In James Bennett and Niki Strange (eds.), Television as Digital Media (pp. 52-75). Durham, NC: Duke University Press.

Von Bekesy, Georg (1960). Experiments in Hearing (trans. E. G. Wever). New York: McGraw-Hill.

Williams, Raymond (1975). Television: Technology and Cultural Form. New York: Schocken Books.

Zettl, Herbert (2005). Sight Sound Motion: Applied Media Aesthetics. Belmont, CA: Thomson Wadsworth.