This page documents the work developed and exhibited in Bergen. It includes preparatory sketches, a video recording of the piece, and a description of its "experimental" setup.

{function: contextual}

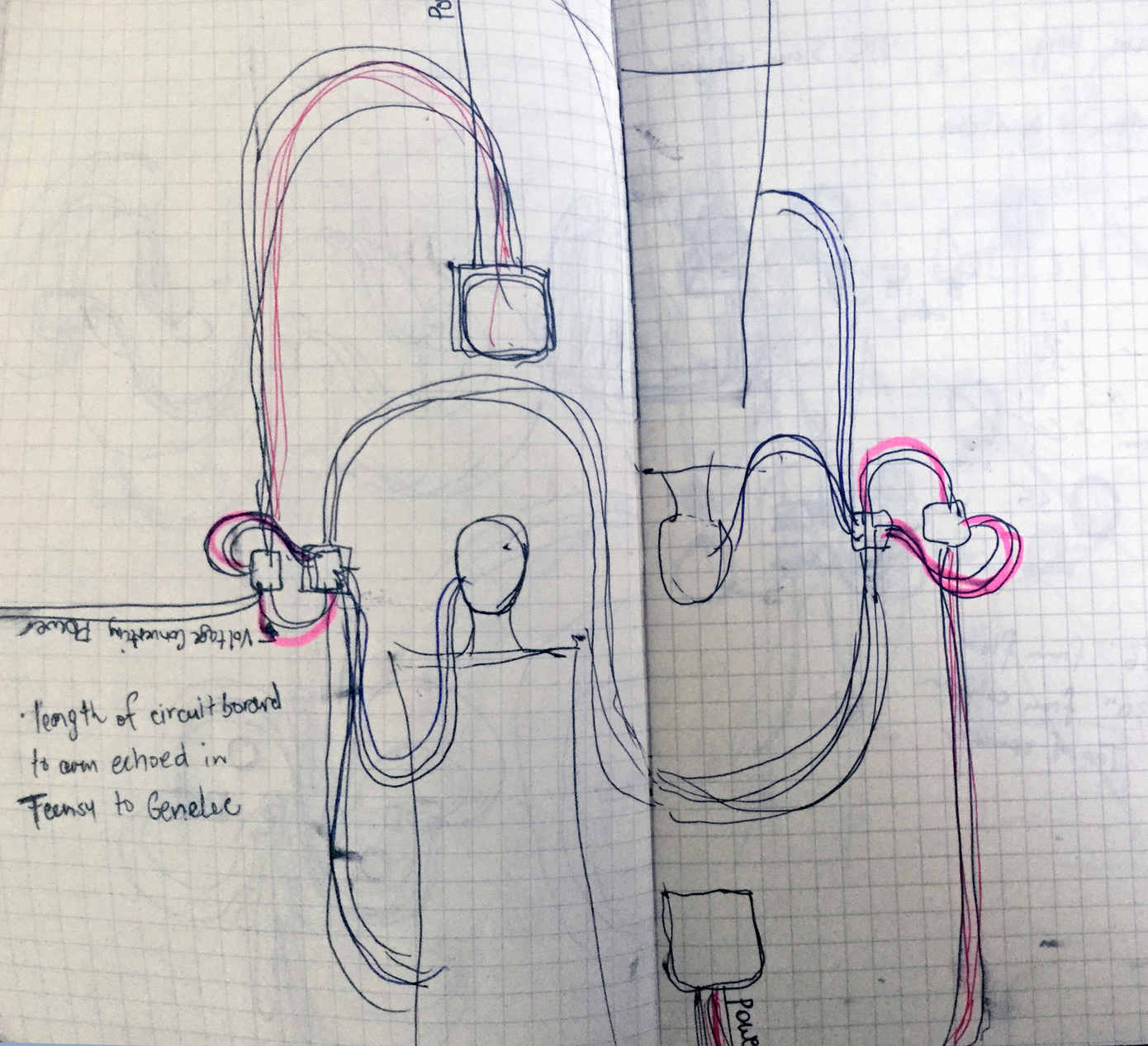

I planned carefully how the cables and wires would overlap and intersect, and what the relationship of the human body to the circuitry might be. The wires have the potential to suggest an analogy with veins or cybernetic extensions, looking elegant or organic, and I didn't want a thoughtless assembly to disturb this.

{kind: caption, artwork: PinchAndSoothe, keywords: [sketch, sketchbook, wires, installation, BioSynth]}

After I made the first drawing I could see the potential of this new setup, and I started sketching how the wires could connect in a way that preserved the aesthetic I had in mind for the installation. The interrelationships of the wires were determined by the bodies of the printed circuit boards themselves, but I improvised the ways the two boards could interconnect. Some loose plans for the Genelec powered speakers are also visible here.

{kind: caption, artwork: PinchAndSoothe, keywords: [sketch, sketchbook, wires, installation, BioSynth]}

Later, while installing in Bergen, I became more interested in responding to the architecture of the space (an available column, really), so I made a design for seated participants, still trying to preserve the long swooping lines the wires made in space. The first drawing was a quick sketch to show the bodily relationships between the participants.

{kind: caption, artwork: PinchAndSoothe, keywords: [sketch, sketchbook, wires, installation, BioSynth]}

Short video explaining the sensors and documenting the final experiment, "Pinch and Soothe".

{kind: caption}

My first sketches involved a setup where participants would lie on the floor. I liked how their heads were positioned beside each other: I remember this position as really intimate and touching from my childhood, when I would lie in the grass with someone in this way.

{kind: caption, artwork: PinchAndSoothe, keywords: [sketch, sketchbook, wires, installation, BioSynth]}

---

meta: true

author: EG

function: documentation

artwork: pinchAndSoothe

keywords: [installation, physiological data, emotions, sonification, sensor, biosensors, biosignals]

---

{EG, 15-Aug-2018}

The improvements I made to the algorithms during the residency made them more computationally efficient, which would let me feed multiple signals into the same microcontroller. This makes me think of "clusters" of users, and of how this design could visually arrange their togetherness into a larger organic structure.

Right now, anything beyond 12 sounds a bit musically daunting to me, but the signal treatment I developed during this residency could be "tuned" to specific ranges for interesting sonic effects.

Electroluminescent wire could help people pick out individual signals by ear, since this visual feedback would become more important as the sound grew more complex. (How do you find yourself as a simple sonic signal in a dense network?)